Details

- Type: Task
- Status: Closed
- Priority: Major
- Resolution: Done

Description

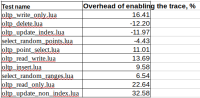

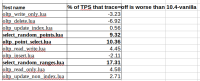

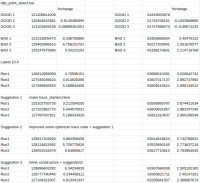

We need to benchmark how much slowdown we experience when we add the optimizer trace to the server.

We need the comparison for:

1) server without the optimizer trace (current 10.4)

2) server with the optimizer trace compiled in but disabled (currently in branch 10.4-mdev6111)

The optimizer trace is currently disabled by default.

Please run sysbench.
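Once both servers have been measured, the comparison comes down to a relative throughput difference. A minimal sketch of that arithmetic, assuming each sysbench run reports a transactions-per-second figure and that several runs per server are averaged; the function name and the sample numbers are hypothetical and are not results from this task:

```python
from statistics import mean


def slowdown_percent(baseline_tps, candidate_tps):
    """Return how much slower the candidate is than the baseline, in percent.

    Both arguments are lists of transactions-per-second figures, one per run.
    A negative result means the candidate was faster than the baseline.
    """
    base = mean(baseline_tps)
    cand = mean(candidate_tps)
    if base <= 0:
        raise ValueError("baseline throughput must be positive")
    return (base - cand) / base * 100.0


# Hypothetical throughput samples, for illustration only.
baseline_10_4 = [1000.0, 1010.0, 990.0]          # server without the optimizer trace
branch_trace_disabled = [980.0, 990.0, 970.0]    # 10.4-mdev6111, trace disabled

print(f"{slowdown_percent(baseline_10_4, branch_trace_disabled):.2f}% slower")
```

Averaging several runs per server helps separate a real regression from run-to-run noise; the same function could be applied per sysbench workload and thread count.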


Issue Links

- is part of: MDEV-6111 optimizer trace (Closed)
- relates to: MDEV-18822 investigate performance regressions in 10.4 (Stalled)