Details

-

Bug

-

Status: Closed (View Workflow)

-

Major

-

Resolution: Won't Fix

-

10.2.30

-

* MariaDB 10.2.30 with Galera 25.3.28(r3875)

* 3-node cluster (with one node receiving the majority of write operations)

* RHEL7, kernel 3.10.0, x86_64

* Virtual machines with 8 vCPU and 48 GB RAM

Description

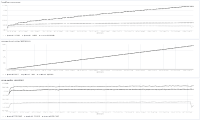

As you can see from the correlation_between_mysqld_memory_and_wsrep_open_transactions.jpg attachment, memory usage of the mysqld process increases indefinitely. One of our 3 nodes (rtp-arkt-ds01) receives the majority of write operations, and that's the node that see's the most rapid memory growth. Only a restart of mysqld releases the memory, so we're having to restart every few months.

When restarting mysqld, I noticed a high number reported for wsdb trx map usage in the log of the high-memory-usage node:

2019-03-29 5:55:21 140128830834432 [Note] WSREP: wsdb trx map usage 515216 conn query map usage 0

|

The wsrep_open_transactions status variable reports the same number, and there is a strong correlation between the memory usage of mysqld and wsrep_open_transactions (see correlation_between_mysqld_memory_and_wsrep_open_transactions.jpg).

On the other hand, there is no correlation with the memory_used status variable, which quickly levels out after a restart of msyqld, so I suspect the issue is only related to Galera replication.

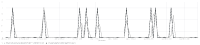

The other 2 nodes in the cluster receive a much slower rate of write operations than rtp-arkt-ds01. If I look at one of them, and plot the time derivative of wsrep_open_transactions and compare it with our application logs that indicate a non-Galera deadlock, there is a strong correlation: wsrep_open_transactions increases each time a non-Galera deadlock occurs. (By "non-Galera deadlock", I mean a deadlock resulting from competing writes to the same node.) This correlation is shown in the correlation_between_wsrep_open_transactions_and_non-galera_deadlocks.jpg attachment.

Note: Our application sees a lot of non-Galera deadlocks (thousands per day).

Given the correlation between mysqld memory usage and wsrep_open_transactions, along with the correlation between wsrep_open_transactions and non-Galera deadlocks, I suspected the non-Galera deadlocks were causing the memory leak. After some searching, I found MDEV-20914, which reports a test failure for galera.MW-328E when using a debug build of the Galera library. In the log output, it reports a wsdb trx map usage of 1 and fails due to a debug assertion about this transaction map being nonempty:

mysqld: galera/src/wsdb.cpp:54: galera::Wsdb::~Wsdb(): Assertion `trx_map_.size() == 0' failed.

|

Here is a modified version of the galera.MW-328E test that shows wsrep_open_transactions is nonzero after a non-Galera deadlock. Note that, just like galera.MW-328E, it's using two connections to the same node for the queries; it's not issuing any queries to the second node.

|

test |

--source include/galera_cluster.inc

|

|

|

|

|

# Create a second connection to node_1

|

--connect node_1a, 127.0.0.1, root, , test, $NODE_MYPORT_1

|

|

|

|

|

--connection node_1

|

CREATE TABLE t1 (i INT PRIMARY KEY, j INT) ENGINE=innodb;

|

CREATE TABLE t2 (i INT PRIMARY KEY, j INT) ENGINE=innodb;

|

|

|

INSERT INTO t1 VALUES (1, 0);

|

INSERT INTO t2 VALUES (2, 0);

|

|

|

SET autocommit = off;

|

START TRANSACTION;

|

UPDATE t1 SET j=1 WHERE i=1;

|

|

|

|

|

--connection node_1a

|

SET autocommit = off;

|

START TRANSACTION;

|

UPDATE t2 SET j=1 WHERE i=2;

|

|

|

|

|

--connection node_1

|

--send INSERT INTO t1 SELECT * FROM t2;

|

|

|

|

|

--sleep 2

|

|

|

|

|

--connection node_1a

|

--error ER_LOCK_DEADLOCK

|

INSERT INTO t2 SELECT * FROM t1;

|

|

|

|

|

--connection node_1

|

--reap

|

DROP TABLE t1, t2;

|

|

|

|

|

--connection node_1

|

--echo

|

--echo # WSREP transaction leaked on node_1 ...

|

SHOW STATUS LIKE 'wsrep_open_transactions';

|

|

|

|

|

--connection node_2

|

--echo

|

--echo # ... but not on node_2

|

SHOW STATUS LIKE 'wsrep_open_transactions';

|

|

result |

connect node_1a, 127.0.0.1, root, , test, $NODE_MYPORT_1;

|

connection node_1;

|

CREATE TABLE t1 (i INT PRIMARY KEY, j INT) ENGINE=innodb;

|

CREATE TABLE t2 (i INT PRIMARY KEY, j INT) ENGINE=innodb;

|

INSERT INTO t1 VALUES (1, 0);

|

INSERT INTO t2 VALUES (2, 0);

|

SET autocommit = off;

|

START TRANSACTION;

|

UPDATE t1 SET j=1 WHERE i=1;

|

connection node_1a;

|

SET autocommit = off;

|

START TRANSACTION;

|

UPDATE t2 SET j=1 WHERE i=2;

|

connection node_1;

|

INSERT INTO t1 SELECT * FROM t2;;

|

connection node_1a;

|

INSERT INTO t2 SELECT * FROM t1;

|

ERROR 40001: Deadlock found when trying to get lock; try restarting transaction

|

connection node_1;

|

DROP TABLE t1, t2;

|

connection node_1;

|

|

|

# WSREP transaction leaked on node_1 ...

|

SHOW STATUS LIKE 'wsrep_open_transactions';

|

Variable_name Value

|

wsrep_open_transactions 1

|

connection node_2;

|

|

|

# ... but not on node_2

|

SHOW STATUS LIKE 'wsrep_open_transactions';

|

Variable_name Value

|

wsrep_open_transactions 0

|

Unfortunately, we only retain metric data for a few months, so I'm not exactly sure when this issue started. It may have always been present, and we may have just started noticing it after changes to our application resulted in more non-Galera deadlocks. I can say that the memory consumption issue has been present since at least MariaDB 10.2.19.

Attachments

Issue Links

- relates to

-

MDEV-22731 Galera: RQG: Assertion `trx_map_.size() == 0' failed in Wsdb on master node shutting down

-

- Closed

-