Details

- Type: Bug
- Status: Closed
- Priority: Major
- Resolution: Not a Bug
- Affects Version/s: 2.0.1
- None
- Environment: Debian 8

Description

Hello,

As described here, Markus asked me to open an issue because this may be a performance bug.

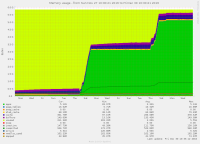

We recently increased the connection idle timeouts on our client-side connection pools from low values (10-15 minutes) to something more reasonable, e.g. 2-3 hours, to cope with traffic spikes. The pools max out at 3k-4k persistent connections in total.

This caused MaxScale to slow down considerably the longer the connection pools stayed open: client response times rose from under 10 ms to 1000 ms or more. Throughout, neither the number of queries per second nor the actual database load increased at all. The queries themselves ran as fast as usual; MaxScale simply took longer to start processing each query. Clearing the persistent pool from the client side fixed the problem immediately.
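To make the client-side setup concrete, here is a minimal Python sketch of a pool that closes connections once they have been idle longer than a timeout, plus a `clear()` that closes all idle connections at once. The class and method names (`IdlePool`, `release`, `acquire`, `evict_expired`, `clear`) are hypothetical; the pool implementation actually used on the client side is not named in this issue. The sketch only illustrates why a 3-hour idle timeout keeps many more connections open against MaxScale during a quiet period than a 15-minute one.

```python
import time


class IdlePool:
    """Hypothetical client-side pool: idle connections older than
    idle_timeout seconds are closed the next time the pool is checked."""

    def __init__(self, idle_timeout, clock=time.monotonic):
        self.idle_timeout = idle_timeout
        self.clock = clock
        self._idle = []  # (connection, time it was returned to the pool)

    def release(self, conn):
        """Return a connection to the pool and start its idle timer."""
        self._idle.append((conn, self.clock()))

    def acquire(self):
        """Reuse the most recently released live connection, if any."""
        self.evict_expired()
        if self._idle:
            conn, _ = self._idle.pop()
            return conn
        return None  # a real pool would open a new connection here

    def evict_expired(self):
        """Close every connection that has been idle longer than the timeout."""
        now = self.clock()
        kept = []
        for conn, since in self._idle:
            if now - since > self.idle_timeout:
                conn.close()
            else:
                kept.append((conn, since))
        self._idle = kept

    def clear(self):
        """Close all idle connections at once (the manual 'clear pool' step)."""
        for conn, _ in self._idle:
            conn.close()
        self._idle = []

    def idle_count(self):
        return len(self._idle)


class FakeConn:
    """Stand-in for a database connection; only records whether it was closed."""

    def __init__(self):
        self.closed = False

    def close(self):
        self.closed = True


class ManualClock:
    """Deterministic clock so the example does not depend on real time."""

    def __init__(self):
        self.now = 0.0

    def __call__(self):
        return self.now


# Compare a 15-minute idle timeout with a 3-hour one after one quiet hour.
clock = ManualClock()
short_pool = IdlePool(idle_timeout=15 * 60, clock=clock)
long_pool = IdlePool(idle_timeout=3 * 3600, clock=clock)
for pool in (short_pool, long_pool):
    for _ in range(4):
        pool.release(FakeConn())

clock.now += 3600  # one hour with no traffic
short_pool.evict_expired()
long_pool.evict_expired()
print(short_pool.idle_count(), long_pool.idle_count())  # prints "0 4"
```

In this sketch `clear()` only closes idle connections; whether clearing the real pool also closed connections that were in use at the time is not stated in the report.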

This was on a 2-CPU machine with poll_sleep = 100 and non_blocking_polls = 10. The epoll statistics showed the following:

| Statistic | Value |
|---|---|
| No. of epoll cycles | 532253223 |
| No. of epoll cycles with wait | 27676568 |
| No. of epoll calls returning events | 31331453 |
| No. of non-blocking calls returning events | 3802205 |
| No. of read events | 62667701 |
| No. of write events | 62785204 |
| No. of error events | 741 |
| No. of hangup events | 25823 |
| No. of accept events | 16595 |
| No. of times no threads polling | 8 |
| Current event queue length | 3 |
| Maximum event queue length | 2563 |
| No. of DCBs with pending events | 0 |
| No. of wakeups with pending queue | 13685 |

No. of poll completions with descriptors:

| No. of descriptors | No. of poll completions |
|---|---|
| 1 | 20015023 |
| 2 | 4643493 |
| 3 | 2863016 |
| 4 | 1377815 |
| 5 | 773572 |
| 6 | 465835 |
| 7 | 297985 |
| 8 | 201369 |
| 9 | 144546 |
| >= 10 | 548744 |
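To put these counters in proportion, the following Python sketch computes a few ratios from them. The variable names are shorthand chosen here, not MaxScale field names, and the ">= 10" bucket is counted as exactly 10, so the computed mean is only a lower bound.

```python
# Counters transcribed from the epoll statistics in this report (MaxScale 2.0.1).
epoll_cycles = 532253223
epoll_cycles_with_wait = 27676568
epoll_calls_returning_events = 31331453

# Poll completions by number of descriptors returned; key 10 is the ">= 10" bucket.
completions = {
    1: 20015023, 2: 4643493, 3: 2863016, 4: 1377815, 5: 773572,
    6: 465835, 7: 297985, 8: 201369, 9: 144546, 10: 548744,
}


def pct(part, whole):
    """Percentage of part in whole."""
    return 100.0 * part / whole


total_completions = sum(completions.values())
wait_share = pct(epoll_cycles_with_wait, epoll_cycles)
events_share = pct(epoll_calls_returning_events, epoll_cycles)
single_share = pct(completions[1], total_completions)
heavy_share = pct(completions[10], total_completions)
# Treating ">= 10" as exactly 10 makes this a lower bound on the true mean.
mean_descriptors_lower_bound = (
    sum(n * count for n, count in completions.items()) / total_completions
)

print(f"epoll cycles with wait:          {wait_share:.1f}%")
print(f"epoll calls returning events:    {events_share:.1f}%")
print(f"completions with 1 descriptor:   {single_share:.1f}%")
print(f"completions with >= 10:          {heavy_share:.2f}%")
print(f"mean descriptors per completion: >= {mean_descriptors_lower_bound:.2f}")
print(f"histogram total {total_completions} vs "
      f"calls returning events {epoll_calls_returning_events}")
```

With the figures above this gives about 5.2% of epoll cycles with a wait, about 5.9% returning events, 63.9% of completions with a single descriptor, 1.75% with 10 or more, and a mean of at least 1.93 descriptors per completion. The histogram total (31331398) is within 55 of "No. of epoll calls returning events" (31331453), so the two tables are consistent with each other. These ratios only describe the polling workload; on their own they do not identify the cause of the slowdown.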

This particular instance was still running 2.0.1 but has since been upgraded to 2.0.2. As a temporary workaround, I have simply increased the number of CPUs for the virtual machine and am now observing what happens.